
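```yaml
version: '3.4'
services:
  rabbitmq:
    image: docker.io/rabbitmq:management
    command:            # ping www.baidu.com after startup
      # note: command replaces the image's default CMD, so RabbitMQ itself is not started here
      - "ping"
      - "www.baidu.com"
    networks:
      - "shooter_cluster"
    deploy:
      endpoint_mode: vip
      restart_policy:
        condition: on-failure
    environment:        # environment variables (these are passed to the program inside the container)
      RABBITMQ_ERLANG_COOKIE: "secret string"
      RABBITMQ_NODENAME: rabbitmq
    ports:
      - "4369:4369"
      - "5671:5671"
      - "5672:5672"
      - "15671:15671"
      - "15672:15672"
      - "25672:25672"
    configs:            # mount the config files declared under the top-level configs key
      - source: rabbitmq_config
        target: /etc/rabbitmq/rabbitmq.config
      - source: definitons_json
        target: /etc/rabbitmq/definitions.json
  redis:
    image: docker.io/redis:latest
    networks:
      - "shooter_cluster"
    deploy:
      # dnsrr: other containers reach this service by service name on the internal network only;
      # it is not meant for external clients (if a service needs no external access, dnsrr is the better choice)
      endpoint_mode: dnsrr
      replicas: 2       # number of replicas to run
      resources:        # resource limits
        limits:
          cpus: "0.1"
          memory: 50M
      restart_policy:   # restart policy
        condition: on-failure
      placement:        # which nodes the service may run on
        constraints: [ node.role == manager ]
    healthcheck:        # health check run after startup
      test: [ "CMD", "curl", "-f", "http://www.momo.com" ]
      interval: 60s
      timeout: 10s
      retries: 3
    depends_on:         # dependencies (ignored by docker stack deploy)
      - rabbitmq
      - other
    ports:              # note: swarm rejects ingress-mode published ports on a dnsrr service
      - "6379:6379"

# Declare the external network at the same level as services; it has already been created outside this file
networks:
  shooter_cluster:
    external: true

# Alternatives (a file may contain only one top-level networks key):
# use the existing shooter_cluster network as the default network
# networks:
#   default:
#     external:
#       name: shooter_cluster

# let the compose file create its own networks
# networks:
#   front-tier:
#     driver: bridge
#   back-tier:
#     driver: bridge

# Global config files
configs:
  rabbitmq_config:
    file: ./rabbitmq.config
  definitons_json:
    file: ./definitions.json
```

The `networks` key also has a special sub-key, `aliases`, which sets extra names for the service on a given network. For example:

```yaml
services:
  some-service:
    networks:
      some-network:
        aliases:
          - alias1
          - alias3
      other-network:
        aliases:
          - alias2
```

What each parameter means:

```yaml
services:
  fms-c-agent:                 # the first service
    image: 172.30.30.24:5000/fms-c-agent:1.0.1
    ports:                     # externally exposed port mapped to the container port
      - "8891:8891"
    networks:                  # join the user-defined network
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure  # restart when the container exits with a non-zero code
    depends_on:                # dependencies (the containers depended on are started first; ignored by docker stack deploy)
      - fms-a-center
      - fms-b-config
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=8891
    volumes:                   # define or mount volumes
      - /data:/data
```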
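In the annotated redis service, `endpoint_mode: dnsrr` is combined with a published port. Under `docker stack deploy`, a DNS round-robin service cannot use the ingress routing mesh, so if the port really must be reachable from outside, one option is the long port syntax (available from file format 3.2) with `mode: host`, which binds the port directly on each node that runs a task. This is a minimal sketch, not the author's file; it assumes the rest of the redis service stays as shown and the external `shooter_cluster` network already exists.

```yaml
version: '3.4'
services:
  redis:
    image: docker.io/redis:latest
    networks:
      - "shooter_cluster"
    deploy:
      endpoint_mode: dnsrr    # DNS round robin instead of a virtual IP
      replicas: 2
    ports:
      - target: 6379          # port inside the container
        published: 6379       # port opened on the node itself
        protocol: tcp
        mode: host            # bypass the ingress routing mesh
networks:
  shooter_cluster:
    external: true
```

With host mode, two tasks cannot both bind port 6379 on the same node, so with `replicas: 2` they need to run on different nodes.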

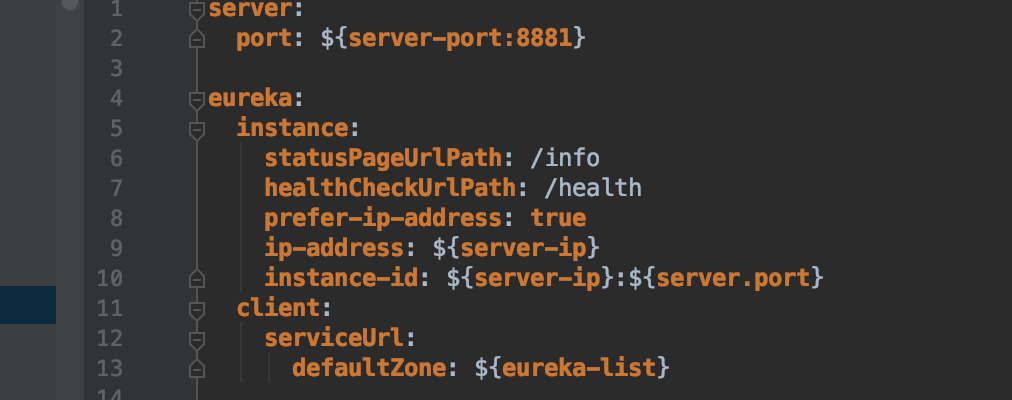

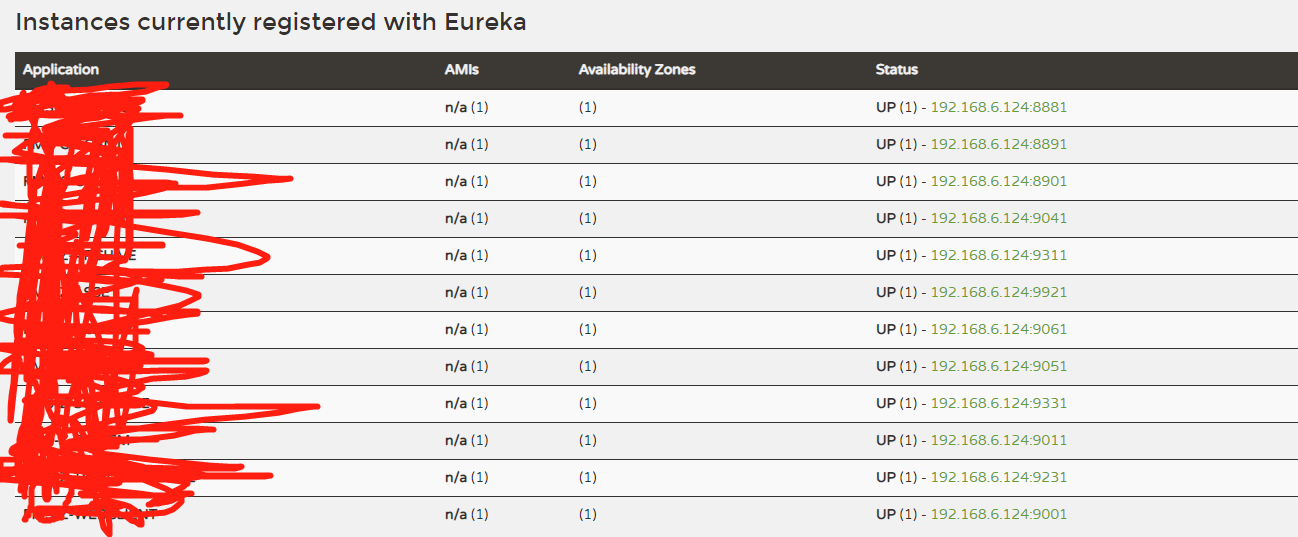

Config microservice registered with Eureka (production example)


```yaml
version: "3.4"
services:
  rabbitmq:
    image: docker.io/rabbitmq:3-management
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    environment:
      RABBITMQ_ERLANG_COOKIE: "secret string"
      RABBITMQ_NODENAME: rabbitmq
    ports:
      - "4369:4369"
      - "5671:5671"
      - "5672:5672"
      - "15671:15671"
      - "15672:15672"
      - "25672:25672"
    configs:
      - source: rabbitmq_config
        target: /etc/rabbitmq/rabbitmq.config
      - source: definitons_json
        target: /etc/rabbitmq/definitions.json
  redis:
    image: docker.io/redis:latest
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    ports:
      - "6379:6379"
  fms-a-center:
    image: 172.30.30.24:5000/fms-a-center:1.0.1
    networks:
      - "shooter_cluster"
    privileged: true
    deploy:
      restart_policy:
        condition: on-failure
    ports:
      - "8761:8761"
    environment:
      FETCH_REGISTRY: "false"
      REGISTER_WITH_EUREKA: "false"
      EUREKA_DEFAULT_ZONE: "http://192.168.6.121:8761/eureka/"
    volumes:
      - /data:/data
  fms-b-config:
    image: 172.30.30.24:5000/fms-b-config:1.0.1
    ports:
      - "8881:8881"
    networks:
      - "shooter_cluster"
    privileged: true
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=8881
    volumes:
      - /data:/data
  fms-c-agent:
    image: 172.30.30.24:5000/fms-c-agent:1.0.1
    ports:
      - "8891:8891"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=8891
    volumes:
      - /data:/data
  fms-c-oauth2:
    image: 172.30.30.24:5000/fms-c-oauth2:1.0.1
    ports:
      - "8901:8901"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=8901
    volumes:
      - /data:/data
  fms-z-activiti:
    image: 172.30.30.24:5000/fms-z-activiti:1.0.1
    ports:
      - "9041:9041"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9041
    volumes:
      - /data:/data
  fms-x-webclient:
    image: 172.30.30.24:5000/fms-x-webclient:1.0.1
    ports:
      - "9001:9001"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9001
    volumes:
      - /data:/data
  fms-z-system:
    image: 172.30.30.24:5000/fms-z-system:1.0.1
    ports:
      - "9011:9011"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9011
    volumes:
      - /data:/data
  fms-z-prebiz:
    image: 172.30.30.24:5000/fms-z-prebiz:1.0.1
    ports:
      - "9051:9051"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9051
    volumes:
      - /data:/data
  fms-z-thirdinterface:
    image: 172.30.30.24:5000/fms-z-thirdinterface:1.0.1
    ports:
      - "9231:9231"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9231
    volumes:
      - /data:/data
  fms-z-postbiz:
    image: 172.30.30.24:5000/fms-z-postbiz:1.0.1
    ports:
      - "9061:9061"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9061
    volumes:
      - /data:/data
  fms-z-archive:
    image: 172.30.30.24:5000/fms-z-archive:1.0.1
    ports:
      - "9311:9311"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9311
    volumes:
      - /data:/data
  fms-z-schedule:
    image: 172.30.30.24:5000/fms-z-schedule:1.0.1
    ports:
      - "9331:9331"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9331
    volumes:
      - /data:/data
  fms-z-asset:
    image: 172.30.30.24:5000/fms-z-asset:1.0.1
    ports:
      - "9121:9121"
    networks:
      - "shooter_cluster"
    deploy:
      restart_policy:
        condition: on-failure
    depends_on:
      - fms-a-center
      - fms-b-config
      - fms-c-agent
      - fms-c-oauth2
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9121
    volumes:
      - /data:/data
networks:
  shooter_cluster:
    external: true
configs:
  rabbitmq_config:
    file: ./rabbitmq.config
  definitons_json:
    file: ./definitions.json
```
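Every fms-z-* and fms-x-* service in the file above repeats the same `networks`, `deploy`, `depends_on` and `volumes` settings. Compose file format 3.4 accepts top-level extension fields (keys starting with `x-`), so those settings could be factored out with a YAML anchor and a `<<` merge key. This is a sketch under that assumption, not the author's file: the name `x-fms-service` is hypothetical, only two services are shown, and the remaining services plus the top-level `networks` and `configs` would stay as they are.

```yaml
version: "3.4"

# Hypothetical extension field: top-level keys starting with "x-" are ignored by
# Compose, so this only serves as a holder for the &fms-service anchor.
x-fms-service: &fms-service
  networks:
    - "shooter_cluster"
  deploy:
    restart_policy:
      condition: on-failure
  depends_on:
    - fms-a-center
    - fms-b-config
    - fms-c-agent
    - fms-c-oauth2
  volumes:
    - /data:/data

services:
  fms-z-system:
    <<: *fms-service          # merge the shared settings from the anchor
    image: 172.30.30.24:5000/fms-z-system:1.0.1
    ports:
      - "9011:9011"
    environment:              # the merge is shallow, so the per-service list stays here
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9011
  fms-z-prebiz:
    <<: *fms-service
    image: 172.30.30.24:5000/fms-z-prebiz:1.0.1
    ports:
      - "9051:9051"
    environment:
      - eureka-list=http://192.168.6.121:8761/eureka/
      - leadu-profiles-active=dev_2
      - server-ip=192.168.6.124
      - server.port=9051
```

Only the image, port and `server.port` differ between these services, which is what the anchor leaves in each service body.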

Diagram: how the developers' services receive the environment-variable parameters

Original template (Compose file format v3)


```yaml
version: "3"
services:

  redis:
    image: redis:alpine
    ports:
      - "6379"
    networks:
      - frontend
    deploy:
      replicas: 2
      update_config:
        parallelism: 2
        delay: 10s
      restart_policy:
        condition: on-failure
  db:
    image: postgres:9.4
    volumes:
      - db-data:/var/lib/postgresql/data
    networks:
      - backend
    deploy:
      placement:
        constraints: [node.role == manager]
  vote:
    image: dockersamples/examplevotingapp_vote:before
    ports:
      - 5000:80
    networks:
      - frontend
    depends_on:
      - redis
    deploy:
      replicas: 2
      update_config:
        parallelism: 2
      restart_policy:
        condition: on-failure
  result:
    image: dockersamples/examplevotingapp_result:before
    ports:
      - 5001:80
    networks:
      - backend
    depends_on:
      - db
    deploy:
      replicas: 1
      update_config:
        parallelism: 2
        delay: 10s
      restart_policy:
        condition: on-failure

  worker:
    image: dockersamples/examplevotingapp_worker
    networks:
      - frontend
      - backend
    deploy:
      mode: replicated
      replicas: 1
      labels: [APP=VOTING]
      restart_policy:
        condition: on-failure
        delay: 10s
        max_attempts: 3
        window: 120s
      placement:
        constraints: [node.role == manager]

  visualizer:
    image: dockersamples/visualizer:stable
    ports:
      - "8080:8080"
    stop_grace_period: 1m30s
    volumes:
      - "/var/run/docker.sock:/var/run/docker.sock"
    deploy:
      placement:
        constraints: [node.role == manager]

networks:
  frontend:
  backend:

volumes:
  db-data:
```

Running


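```shell
# Specify the compose file explicitly (-f is a global option and goes before the subcommand)
docker-compose -f docker-compose.yml up -d

# When the yml file is in the current directory:
docker-compose up -d

# Remove (stopped) containers:
docker-compose rm
```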

Network settings in YAML


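1. If `external` is `true`, then when the services are started with `docker-compose up -d`, Docker first looks for the network declared as external and connects to it if it is found; otherwise an error is reported. When the value is `false`, or there is no `external` option at all, a network named `[current directory name]_emqx-bridge` is created automatically.

```yaml
networks:
  shooter_cluster:
    external: true
```

```yaml
networks:
  emqx-bridge:
    external: false
```

```yaml
networks:
  loki:
```

2. Use an existing network (`shooter_cluster` must already have been created outside this file):

```yaml
networks:
  default:                    # the project's default network (services without a networks key join it)
    external:                 # the network is defined outside this file
      name: shooter_cluster   # name of the existing network
```

3. Join the specified network `backend` and give the container the network alias `test1`; once the alias is set, other containers on the same network can reach this container with `ping test1`:

```yaml
networks:
  backend:
    aliases:
      - test1
```

4. Set the network driver:

```yaml
networks:
  emqx-bridge:
    driver: bridge            # use the bridge network driver
```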
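Points 3 and 4 can be combined into one small, self-contained file. This is a sketch for `docker-compose up`, not taken from the original article: the service names `cache` and `client` are hypothetical, and the `alpine` image is chosen only because its BusyBox `ping` is available. The `client` container resolves the alias `test1` to the `cache` container over the `backend` bridge network.

```yaml
version: "3.4"
services:
  cache:                       # hypothetical service reachable as "test1"
    image: redis:alpine
    networks:
      backend:
        aliases:
          - test1              # extra DNS name on the backend network only
  client:                      # hypothetical service on the same network
    image: alpine
    command: ["ping", "-c", "3", "test1"]
    depends_on:
      - cache
    networks:
      - backend
networks:
  backend:
    driver: bridge             # no external option, so docker-compose creates it
```

Running `docker-compose up` in this directory should show the client's ping replies from `test1`. Because `external` is not set, Compose creates the network itself and prefixes its name with the project name (by default the directory name), as described in point 1.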

- Permalink: https://www.yoyoask.com/?p=3363

- Please credit when reposting: published by shooter on SHOOTER